Mark Zuckerberg on Trial: 3 Design Choices That Could Redefine Social Media Liability

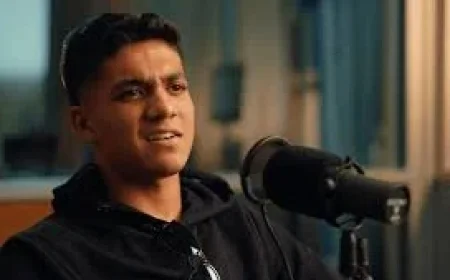

In a New Mexico courtroom, jurors are hearing a dispute that hinges less on slogans about “safety” and more on how product decisions were justified inside a company built on engagement. In a taped deposition played to the jury, Mark Zuckerberg rejected claims that Facebook and Instagram are “addictive,” while prosecutors pressed him with internal communications and user emails dating back to 2008. The case is being framed as a bellwether that could influence thousands of similar lawsuits, and it is testing where product design ends and legal responsibility begins.

Mark Zuckerberg and the addictiveness claim: a battle over definitions and disclosures

The New Mexico attorney general alleges Meta violated state consumer protection laws by failing to disclose what it knew about the dangers of addiction to social media and child sexual exploitation on its platforms. Meta disputes the allegations, arguing it discloses risks and works to remove harmful content and experiences, even while acknowledging that some bad material still gets through. In the deposition recorded last year and shown during the trial’s fourth week, prosecutors challenged Mark Zuckerberg on whether users had repeatedly told the company and him personally that the products felt addictive.

Zuckerberg pushed back on the term itself, saying people sometimes use “addictive” colloquially and adding, “That’s not what we’re trying to do with the products, and it’s not how I think they work.” At the same time, he said he wants to understand these experiences to improve products in ways people want.

The argument is not merely semantic. If the dispute becomes a question of what Meta knew and what it disclosed, jurors may be asked to weigh internal discussion against public posture. The core tension: consumer protection claims often turn on what risks were known, how they were communicated, and whether product choices reflected those risks.

Deep analysis: three design-and-policy choices now central to liability questions

1) Engagement goals and “time spent”

In the deposition, Zuckerberg conceded he initially set goals for employees to increase the amount of time teenagers spent on Meta’s platforms, amid efforts to expand business revenue and users. He described “time spent” as one of the major engagement goals, then said that starting “sometime during 2017 and beyond,” Meta focused on other metrics. For the trial narrative, this matters because plaintiffs are positioning engagement optimization as a design choice with foreseeable downsides for young users, while the defense is likely to emphasize subsequent shifts and safety efforts.

2) Recommendations and unwanted interactions

Recorded depositions from Zuckerberg and Instagram leader Adam Mosseri were played at trial on Tuesday and Wednesday, as prosecutors pursued claims that harms to children, such as sexual exploitation and detriments to mental health, are inevitable at Meta’s scale. The court heard that Meta identified the “People you may know” algorithm as a main driver of sexually inappropriate interactions with children, with the tool used to discover victims in 79% of identified cases in 2018. Prosecutors also presented evidence that Meta estimated in 2020 that 500,000 children were receiving sexually inappropriate communications on Instagram each day, including grooming. Meta responded that the technology used at the time was overly broad and cautious, capturing interactions that were not inappropriate.

This is where product architecture becomes central: if recommendations can be shown to systematically connect adults to minors in risky ways, that can shift debate from isolated bad actors to platform-enabled pathways. Separately, prosecutors questioned Mosseri about policies for young users that might contribute to unwanted communications with adults.

3) Privacy, encryption, and limits of “perfect” safety

In a taped deposition, Zuckerberg said criminal behavior on Meta’s platforms is an unfortunate reality when “serving billions of people,” arguing that the standard should not assume perfection. Jurors also heard Zuckerberg authorized end-to-end encryption for Facebook Messenger in 2023 despite warnings from child safety groups Thorn and the National Center for Missing and Exploited Children (NCMEC) that the move could pose risks to children; he said privacy was a more pressing issue. Encryption prevents anyone other than the sender and intended recipient from viewing messages and keeps content from being stored on Meta’s servers.

The legal and policy fault line is clear even on the record presented at trial: reducing visibility into private messaging can restrict detection, yet it can also be defended as a privacy protection. How jurors interpret that tradeoff—especially against allegations of knowingly enabling predators—could shape how future courts treat “safety by design” claims against private communications tools.

Expert perspectives and institutional stakes inside the courtroom

Several key voices are on the record through the trial proceedings and depositions shown to jurors. Raul Torrez, New Mexico Attorney General, alleges Meta put profits and user engagement over child safety, accusing the company of knowingly enabling predators to exploit children on Facebook and Instagram. On the defense side, a Meta spokesperson said the company has “strict, longstanding rules against child exploitation” and has invested billions to fight it through proactive detection technology and safety features designed to prevent harm, while also emphasizing transparency around content removals and acknowledging no system can be perfect.

Inside the depositions, Adam Mosseri, Head of Instagram, faced questions about Meta’s approach to safety, corporate profits, and features affecting younger users. Meanwhile, prosecutors directly challenged Mark Zuckerberg with internal materials and past user communications, attempting to tie leadership-level awareness to product direction.

The institutional stakes stretch beyond this single trial. The New Mexico case, along with a separate trial in Los Angeles referenced in court, could set the course for thousands of similar lawsuits against social media companies. Zuckerberg has also answered questions from Congress about youth safety on Meta’s platforms and, during 2024 congressional testimony, apologized to families who said tragedies were linked to harms on Meta’s services.

Regional and global implications: what a New Mexico verdict could change

Meta’s apps—Facebook, Instagram, and WhatsApp—each have 3 billion monthly active users, placing any courtroom-driven change in the category of global product governance rather than local compliance. If the New Mexico jury accepts arguments that certain features, metrics, or recommendation systems created undisclosed consumer risks, the pressure on other platforms to document internal risk awareness and adjust design choices could intensify.

Meta also points to changes it introduced, including teen accounts with default protections that debuted in 2024. Whether jurors view such measures as meaningful safeguards or as insufficient relative to earlier internal warnings may influence not only outcomes in New Mexico, but the negotiating posture in future cases that test how product liability concepts apply to social media design.

The question left hanging over the industry

The trial is exposing a core paradox: a platform can argue it is not “trying” to produce addictiveness or harm, yet still be challenged on whether its systems predictably create those outcomes at scale. For Mark Zuckerberg, the deposition record shown to jurors puts leadership intent, engagement strategy, and safety tradeoffs into a single frame. As more lawsuits line up behind this bellwether, the unresolved question is whether courts will treat these product decisions as inevitable imperfections of serving billions, or as design choices that carry a duty to warn, redesign, and disclose more than companies have been willing to concede.