Google Unveils AI-Designed 7th-Gen TPU: 4x Faster Performance

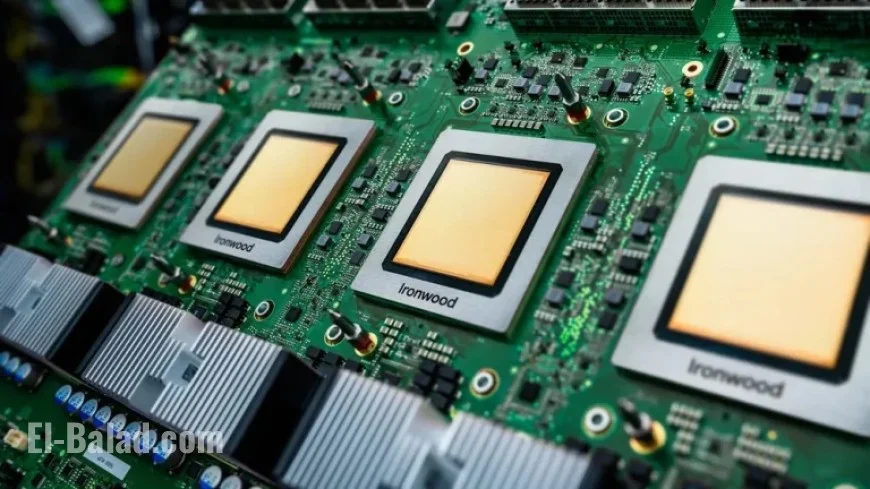

Google has unveiled its seventh-generation Tensor Processing Unit (TPU), named Ironwood. The chip is designed primarily for high-speed AI inference, and Google claims substantial gains in both performance and efficiency over the previous generation.

Key Features of Ironwood TPU

- Enhanced Performance: According to Google, Ironwood delivers more than four times the per-chip performance of its predecessor, for both AI training and inference workloads.

- Purpose-Built Design: Where earlier TPU generations were oriented mainly toward training, Ironwood is engineered first for inference, the real-time serving of AI queries, while still supporting training.

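- High Bandwidth Memory: A fully configured cluster of Ironwood chips shares up to 1.77 petabytes of High Bandwidth Memory (HBM), giving large models fast access to their data. The figure is a cluster-wide total, not the capacity of a single chip.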

Networking and Clustering

Google has developed Ironwood to operate within large supercomputer-scale clusters. Up to 9,216 chips can be linked in a single domain, which Google calls a pod. The chips are connected by an Inter-Chip Interconnect running at 9.6 Tb/s, which is intended to reduce the data bottlenecks that usually limit clusters of this size.
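
The 1.77-petabyte memory figure and the 9,216-chip cluster size can be related with simple arithmetic. The sketch below is a back-of-the-envelope check, not an official calculation. It assumes 192 GB of HBM per chip, a per-chip figure that does not appear in this article, and it uses decimal units (1 PB = 1,000,000 GB). The function name is hypothetical.

```python
# Assumption: 192 GB of HBM per chip (not stated in this article).
HBM_PER_CHIP_GB = 192
# Cluster size quoted in the article.
CHIPS_PER_POD = 9_216


def pod_hbm_petabytes(chips: int = CHIPS_PER_POD,
                      gb_per_chip: int = HBM_PER_CHIP_GB) -> float:
    """Total HBM across a cluster, in decimal petabytes (1 PB = 1,000,000 GB)."""
    return chips * gb_per_chip / 1_000_000


if __name__ == "__main__":
    # 9,216 chips x 192 GB = 1,769,472 GB
    print(f"{pod_hbm_petabytes():.2f} PB")  # prints "1.77 PB"
```

Under that assumption the total rounds to 1.77 PB, which suggests the headline memory number describes a full cluster rather than one chip.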

AI-Designed Innovation

Ironwood’s physical layout was produced with the help of AlphaChip, an AI method in which Google researchers apply reinforcement learning to the problem of arranging a chip’s components. The approach shows how AI can improve hardware design, which in turn benefits the AI applications that run on that hardware.
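
The article does not describe how AlphaChip works internally. As a rough illustration of the kind of objective a layout optimizer works against, the sketch below scores a toy placement by half-perimeter wirelength (a common proxy for wiring cost) and improves it with random swaps. This is plain local search, not reinforcement learning and not Google's method. The block names, nets, and function names are all hypothetical.

```python
import random


def hpwl(placement, nets):
    """Half-perimeter wirelength: for each net, bounding-box width plus height."""
    total = 0
    for net in nets:
        xs = [placement[block][0] for block in net]
        ys = [placement[block][1] for block in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def improve_by_swaps(placement, nets, steps=2000, seed=0):
    """Try random pairwise swaps; keep a swap only if it does not raise the cost."""
    rng = random.Random(seed)
    placement = dict(placement)  # work on a copy, leave the input untouched
    blocks = list(placement)
    best = hpwl(placement, nets)
    for _ in range(steps):
        a, b = rng.sample(blocks, 2)
        placement[a], placement[b] = placement[b], placement[a]
        cost = hpwl(placement, nets)
        if cost <= best:
            best = cost
        else:
            # Undo the swap because it made the wiring longer.
            placement[a], placement[b] = placement[b], placement[a]
    return placement, best


if __name__ == "__main__":
    # Hypothetical toy design: six blocks on a 3x2 grid, three nets.
    start = {"A": (0, 0), "B": (2, 1), "C": (1, 0),
             "D": (0, 1), "E": (2, 0), "F": (1, 1)}
    nets = [("A", "B"), ("C", "D", "E"), ("B", "F")]
    final, cost = improve_by_swaps(start, nets)
    print("initial cost:", hpwl(start, nets), "final cost:", cost)
```

A learned approach replaces the random swaps with a policy trained to place components well, but the idea of scoring a layout against a cost such as wirelength is the same.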

Availability

Ironwood is currently available to Google Cloud customers, marking a significant milestone in Google’s strategy to dominate the AI sector. The launch underscores the company’s commitment to advancing AI technology through superior hardware solutions.