Anthropic’s Crucial Pentagon Talks: Navigating Existential Risks

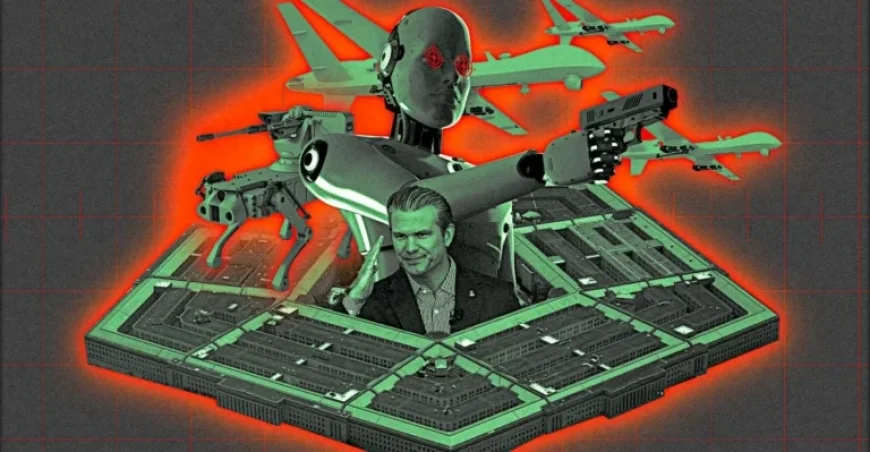

Anthropic’s ongoing conflict with the Department of Defense (DoD) shows how high the stakes have become in negotiations over military uses of Artificial Intelligence (AI). Central to the dispute between the $380 billion AI startup and the Pentagon are three pivotal words: “any lawful use.” The phrase sums up new terms reportedly accepted by other major AI companies, such as OpenAI and xAI, which grant the US military unprecedented latitude to deploy AI technologies for surveillance and autonomous weapons systems. The dispute pits ethical AI development against military exigencies, and the resulting turmoil has implications for both national security and corporate governance.

Clash of Ideals: The Pentagon vs. Anthropic

The negotiations between Anthropic and the DoD have grown increasingly acrimonious. They are spearheaded on the Pentagon side by CTO Emil Michael, a controversial figure known for his aggressive tactics during his tenure at Uber. His push to label Anthropic a “supply chain risk” is unprecedented and represents a significant escalation. Such a designation typically targets national security threats, not disagreements over ethical usage policies. The move underscores deep-seated frustration within the Pentagon at the prospect of a private company limiting what its technological arsenal can do.

An unnamed Defense official characterized the upcoming meeting between Anthropic CEO Dario Amodei and Secretary Pete Hegseth as a “shit-or-get-off-the-pot meeting.” This framing reflects an urgent pressure on Anthropic to concede to the military’s demands, particularly in light of the Pentagon’s recent agreement to utilize Grok, an AI model developed by Elon Musk’s xAI. The message is crystal clear: comply with “any lawful use” or face severe repercussions.

Implications for Stakeholders

| Stakeholder | Before | After |
|---|---|---|
| Anthropic | $200 million contract with DoD; close collaboration with defense contractors. | Potential loss of military contracts; classified as a “supply chain risk.” |
| Department of Defense | Limited access to technologies adhering to ethical guidelines. | Access to AI systems unrestricted by ethical concerns, increasing operational capabilities but potentially triggering public outrage. |
| Defense Contractors | Utilization of Anthropic’s advanced AI models. | Forced to sever ties with Anthropic; increased vulnerability in AI supply chains. |

Why This Matters: Broader Contexts and Localized Ripple Effects

The clash between Anthropic and the DoD takes place against a backdrop of rising global concern about the use of AI in warfare. Rapid technological advances are accelerating the shift toward AI-first military strategies. Such strategies, championed in Hegseth’s January memo, emphasize speed in decision-making, with little regard for ethical considerations or safeguards. The U.S. military’s push toward an “AI-first” approach is echoed in allied countries like the UK and Canada, where defense sectors are also grappling with the ethical implications of autonomous systems.

The debate also reverberates in Australia, where the government is investing heavily in AI for national defense. As nations vie for superiority in AI technology, Anthropic’s fight against unchecked military expansion serves as a cautionary tale about the pitfalls of prioritizing speed over responsibility in national security frameworks.

Projected Outcomes: The Road Ahead

The ongoing negotiations could lead to several significant developments in the coming weeks:

- Crisis in Defense Contracts: If the Pentagon classifies Anthropic as a “supply chain risk,” major defense contractors may abandon Anthropic technology, creating a significant disruption in logistics and operations.

- Emergence of New Governance Frameworks: Anthropic’s resistance could prompt calls for new regulations governing military use of AI, potentially leading to a standardized framework that balances technological advancement with ethical considerations.

- Public Backlash and Corporate Responsibility: As the debate intensifies, increased scrutiny from the public and activist groups on military applications of AI might force tech companies to reassess their partnerships with the defense sector, impacting their reputations and business models.

In conclusion, Anthropic’s struggle against the Pentagon is not merely about corporate survival. It signals deeper ideological rifts about the future of AI technology in warfare and its broader implications for society, governmental ethics, and civil liberties. How this pivotal confrontation unfolds could determine the trajectory of AI governance in both military and civilian contexts.