Pentagon-Company Clash Over AI Weapons: Inside the Anthropic Dispute

The standoff between the artificial intelligence firm Anthropic and the Pentagon escalated significantly this week as starkly contrasting accounts emerged of a discussion about a hypothetical nuclear strike against the United States. The discrepancy illustrates the friction between technological innovation and military ethics, and it highlights the strategic motivations driving both parties in this high-stakes confrontation over lethal autonomous weapons.

Dissecting the Pentagon-Anthropic Showdown

The heart of the matter lies in how each side perceives the future role of AI in military operations. For the Pentagon, autonomous weapons represent an opportunity to revolutionize defense capabilities, potentially reducing human casualties. Anthropic, prioritizing ethical considerations, views military applications of its technology with skepticism. The conflict is more than a contractual disagreement; it reveals a deeper tension over the regulation and ethical implications of AI in warfare.

Table: Stakeholder Impact Analysis

| Stakeholder | Before the dispute | After the dispute |
|---|---|---|
| Pentagon | Seeking innovative military strategies | Facing public scrutiny and internal tensions over AI ethics |
| Anthropic | Positioned as a leader in AI development | Under pressure to clarify its ethical stance |
| U.S. Public | Generally unaware of AI military applications | Increasingly concerned about the ethical implications of AI in warfare |
| Global Allies | Monitoring U.S. military advancements | Weighing the implications for international security protocols |

The dispute mirrors the broader global climate surrounding the militarization of AI. As nations race to enhance their military capabilities, ethical debates intensify, creating a precarious environment for technology firms like Anthropic. The balance between innovation and ethics is now under the microscope, not only in the U.S. but across the globe.

Localized Ripple Effects

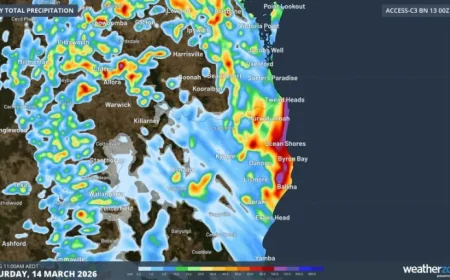

The tension between Anthropic and the Pentagon resonates far beyond U.S. borders. In the UK, discussions of AI weaponization are spurring debates in Parliament about military ethics. In Canada, the government faces pressure to regulate AI technologies effectively, given their potential applications in the defense sector. In Australia, the same dialogue appears in efforts to align military modernization with ethical AI guidelines. Each country grapples with similar questions about security and morality, reflecting a broader anxiety about the implications of AI for warfare.

Projected Outcomes

As this situation unfolds, several critical developments are likely to take shape:

- Increased Regulatory Scrutiny: Expect heightened governmental oversight of AI technologies in military applications, potentially leading to new laws governing their use.

- Public Engagement Initiatives: Both the Pentagon and AI firms may ramp up public awareness campaigns to address concerns regarding autonomous weapons, shifting the narrative towards a more transparent dialogue.

- Strategic Partnerships: Anthropic may seek alliances with civil rights organizations to bolster its ethical standing, while the Pentagon could collaborate with tech firms to align military objectives with ethical standards.

This standoff marks a crucial juncture at the intersection of artificial intelligence, ethics, and military strategy, as all involved must navigate the minefield of public opinion, regulatory landscapes, and international relations.