Ronan Farrow and the 2023 OpenAI Firing: 5 Revelations Behind the Power Struggle

Ronan Farrow has become central to a debate that is no longer only about one executive’s fate, but about who gets to shape technologies with widening influence. In the account at issue, the dispute over Sam Altman turned on a deeper question: whether a company built around extraordinary power can remain accountable when its leaders are themselves under suspicion. The answer matters because the story reaches beyond corporate drama and into the rules governing AI products, military use, and public trust. What happened inside OpenAI now reads like a test of whether guardrails can survive ambition.

Why the OpenAI dispute matters now

The immediate issue is not simply that OpenAI’s board moved against Altman in 2023. It is that the company was founded on a premise unlike ordinary tech firms: safety had to outrank revenue, and the chief executive had to meet a higher ethical standard. That premise collapsed into conflict when board members concluded they could not trust the person at the center of the project. In the memos described, Altman was accused of misrepresenting facts to executives and board members and of deceiving them about internal safety protocols. One memo opened with a blunt judgment: “Sam exhibits a consistent pattern of…” followed by “Lying.”

The significance lies in the mismatch between the scale of the technology and the fragility of the governance. The company’s products now reach into smartphones, defense contracts, and law enforcement. At the same time, the organization’s market value and commercial momentum create pressure to keep advancing. That tension makes the trust question central, not incidental. If leadership can shape systems that may influence work, security, and public decision-making, then the credibility of those leaders is not a side issue; it is part of the risk structure itself.

Inside the boardroom: what the memos suggest

The internal effort to document concerns was unusually deliberate. Ilya Sutskever, the chief scientist, sent secret memos to board members after weeks of private discussions about Altman and Greg Brockman. The files included roughly seventy pages of Slack messages and human resources documents, plus explanatory text and cellphone images apparently used to avoid detection on company devices. The final memos were sent as disappearing messages so that no one else would see them. One board member described Sutskever as terrified.

That sequence suggests more than a routine corporate dispute. It points to a board convinced that the organization’s founding logic required an exceptional standard of honesty from its chief executive. Helen Toner, an A.I.-policy expert, and Tasha McCauley, an entrepreneur, reportedly saw the material as confirmation of their own concerns. The board’s authority to fire the C.E.O. existed precisely because the nonprofit structure was supposed to keep humanity’s safety above company success. In that sense, the firing was a governance event shaped by the company’s own design.

Ronan Farrow and the trust question at the center of AI power

Ronan Farrow’s reporting sharpens the larger issue: what happens when a company with a public mission becomes so powerful that its own board doubts the person running it? The answer matters because the company’s stated commitments now coexist with a much broader footprint. The text describes a later Pentagon agreement for classified AI operations, plus public assurances that the arrangement had more guardrails than previous classified deployments. Yet the same company also framed its work as strongly pro-democracy and supportive of collaboration with the democratic process.

That combination is what makes the story analytically unstable. A company can promise safeguards, but if the board once judged its chief executive unreliable, then every promise of restraint carries a governance problem behind it. The issue is not whether AI should advance; it already is advancing. The issue is whether the people directing that advance can be held to the standards their power requires. In this context, Ronan Farrow is less a headline than a lens for examining whether modern AI leadership has outgrown the ordinary corporate trust model.

Expert perspectives and the broader stakes

The context includes a warning from Sutskever himself: anyone building civilization-altering technology carries “a heavy burden” and “unprecedented responsibility.” That is not a casual remark; it is the logic on which the company was founded. It also frames why members of the board believed the C.E.O. should be removable if he failed the candor test.

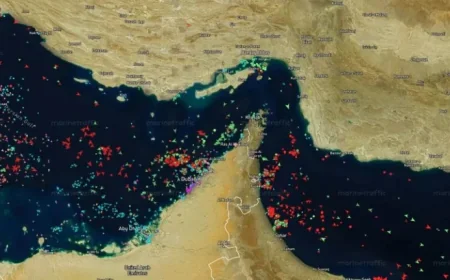

Jake Laperruque of Tech Policy adds another layer through his observation that the company’s stated “red lines” on mass domestic surveillance, direct autonomous weapons systems, and high-stakes automated decisions appear similar to the boundaries that led another proposed agreement to fail. His point underscores the fragility of public assurances when the same technologies can move between commercial, governmental, and security uses.

Regional and global implications for AI governance

The ripple effects are wider than one company or one boardroom. If AI products continue spreading into public-sector and defense uses, then the standards governing leadership integrity become a global policy issue. Governments, regulators, and institutional customers will all face the same basic question: can they rely on a company’s promises if the company’s own overseers once concluded the top executive was not consistently candid?

That question is especially consequential because the company’s reach is no longer abstract. It extends into labor markets, electricity demand, datacentres, and state power. The broader consequence is that AI governance may need to be built less around trust in charismatic founders and more around durable institutional checks. In that sense, Ronan Farrow’s reporting points to a larger truth: the future of AI may depend not only on what the systems can do, but on who is allowed to direct them. And if the next chapter is written under that pressure, who will prove worthy of the trust the technology demands?