Roblox and the new age-based safety shift as early June approaches

Roblox is approaching a turning point as it prepares to roll out age-based accounts and expanded parental controls for users under 16 in early June. The move comes amid rising pressure over child safety, and it signals that the platform wants age, content access, and communication settings to work together more tightly than before.

What Happens When Age Checks Become the Default?

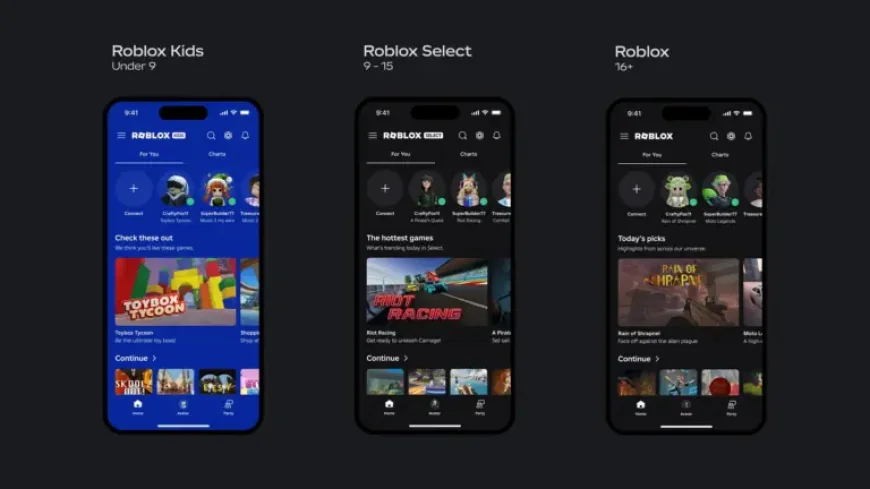

The new framework introduces two age-based account types: Roblox Kids for ages 5 to 8, and Roblox Select for ages 9 to 15. Users who complete age checks, or are verified by a parent, will be routed into the appropriate account type, with automatic transitions as they age. Roblox Kids will disable communication by default and limit access to games with Minimal or Mild content labels. Roblox Select will allow access to games labeled up to Moderate, while leaving the default communication settings for that age band unchanged.
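The routing rules described above can be read as a small lookup from age to account type and content ceiling. The following is a minimal illustrative sketch of that reading, not Roblox's implementation: the names `AccountType`, `account_type_for`, and `can_access` are hypothetical, the handling of ages under 5 is an assumption, and any label not named in this article is assumed to be more mature than Moderate.

```python
from dataclasses import dataclass
from typing import Optional

# Content labels named in the article, ordered from least to most mature.
# Assumption: any label not in this list is treated as more mature than Moderate.
LABELS = ["Minimal", "Mild", "Moderate"]


@dataclass(frozen=True)
class AccountType:
    name: str
    max_label: Optional[str]                  # None means no cap is stated
    chat_disabled_by_default: Optional[bool]  # None means defaults are unchanged or not stated


def account_type_for(age: int) -> AccountType:
    """Map a verified age to the account type described in the article."""
    if age < 5:
        # Assumption: the article does not describe accounts for ages under 5.
        raise ValueError("no account type described for ages under 5")
    if age <= 8:
        return AccountType("Roblox Kids", "Mild", True)
    if age <= 15:
        return AccountType("Roblox Select", "Moderate", None)
    return AccountType("Standard account", None, None)


def can_access(age: int, label: str) -> bool:
    """Return True if a game with this content label falls within the age band's cap."""
    account = account_type_for(age)
    if account.max_label is None:
        return True  # no change stated for users 16 and older
    if label not in LABELS:
        return False  # assumed to exceed every capped band
    return LABELS.index(label) <= LABELS.index(account.max_label)
```

Because the account type is derived from age on each call rather than stored, a user who turns 9 or 16 moves into the next band without any extra step, which mirrors the automatic transitions the company describes. Parent approvals for games outside a child's bracket are not modeled here.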

The company is also adding a continuous selection process for games available to users under 16. That matters because it shifts safety from a one-time filter to an ongoing framework. Roblox says age-checked users under 16 should still have access to the vast majority of their favorite games at launch, while users 16 and older will not see changes to their experience.

What Changes for Parents and Younger Users?

For parents, the update appears designed to make controls easier to understand and more directly tied to age. Expanded parental tools will include the ability to block specific games, manage direct chat, and approve games outside a child’s usual age bracket. For younger users, the experience will become more segmented, with distinct visual cues that signal whether an account is Roblox Kids or Roblox Select.

| Account type | Age range | Game access | Communication |
|---|---|---|---|
| Roblox Kids | 5 to 8 | Minimal or Mild | Disabled by default |
| Roblox Select | 9 to 15 | Up to Moderate | Default settings remain unchanged |
| Standard account | 16 and older | No change stated | No change stated |

This matters because the company is no longer presenting child safety as a single control panel. It is building a layered system in which content, communication, moderation, and parental oversight are linked.

What Forces Are Reshaping Roblox’s Safety Strategy?

Roblox is responding to a combination of scrutiny and scale. The platform has faced increased pressure over perceived risks for children, including grooming and exposure to inappropriate user-generated content. It has also been investing heavily in the product itself, with new AI tools for creators and a subscription service intended to support revenue and engagement. Those two tracks, trust and growth, now have to move together.

The company says all content already passes existing moderation systems, including AI asset scanning, ongoing user report review, and multimodal moderation that evaluates scenes in real time. For users under 16, an additional continuous process will apply developer verification, extended evaluation, and tighter limits on content better suited to older audiences. That suggests the safety model is becoming more dynamic, but it also means implementation quality will be crucial.

What Are the Most Likely Outcomes?

Three scenarios stand out from the current signals:

- Best case: The new age-based structure reduces confusion for parents, improves trust, and keeps most younger players inside the games they already use.

- Most likely: Roblox improves its safety posture, but parents remain cautious and the company has to keep refining controls as users move between age brackets.

- Most challenging: Gaps in enforcement or user workarounds weaken confidence, keeping the child-safety debate alive even after rollout.

The most important variable is whether the new model feels seamless to families while still being strict enough to address concerns. If it does, Roblox can argue that safety is becoming part of the platform’s architecture rather than an add-on.

What Happens When Safety and Growth Collide?

There will be winners and losers in this shift. Parents gain more control and clearer defaults. Younger users may gain a safer, more age-aligned experience, though with tighter limits. Developers whose games fit the younger age bands could benefit from stronger visibility inside the curated catalogs. Games that rely on older audiences may face more scrutiny and fewer pathways into child-facing spaces.

For Roblox itself, the upside is reputational: a stronger case that it is acting on child-safety concerns instead of reacting to them. The risk is operational: the more precise the system becomes, the more it must prove that age checks, moderation, and parental controls work consistently in practice.

The takeaway for readers is that Roblox is moving into a more mature phase of platform governance. The company is trying to make trust measurable through age-based defaults, ongoing selection, and expanded controls. If the rollout works, it could reset expectations for how large user-generated platforms manage younger audiences. If it does not, the debate over safety will only sharpen. In either case, Roblox is now a test of whether scale and child protection can be aligned without slowing the platform’s growth.