OpenAI GPT-5.5: 7 Signals Behind OpenAI’s New Model and NVIDIA’s Fast-Track Rollout

OpenAI’s GPT-5.5 is arriving with an unusually practical message: the newest frontier model is being framed not as a lab milestone but as an operating tool for coding, security, and enterprise workflows. Its first visible deployment inside Codex, OpenAI’s agentic coding application, shows how the model is being positioned for work that demands speed, auditability, and scale. That matters because the rollout is already tied to NVIDIA GB200 NVL72 systems, where performance gains are being measured in cost, output, and time saved.

Why OpenAI’s GPT-5.5 matters now

The significance of GPT-5.5 is not just that it exists, but that it is being introduced through a use case built around real work. Inside NVIDIA, more than 10,000 employees across engineering, product, legal, marketing, finance, sales, human resources, operations, and developer programs are already using GPT-5.5-powered Codex. The company says the results have been described internally as “mind-blowing” and “life-changing.” Those are subjective terms, but the reported operational shift is concrete: debugging cycles that once took days are shrinking to hours, and work in complex, multi-file codebases that previously stretched over weeks is now finishing overnight.

This is why the launch reads as more than a product update. It signals that frontier AI is increasingly being judged by whether it can be deployed safely inside enterprise systems and still improve throughput. In that sense, GPT-5.5 is being tested not in theory, but in workflows where reliability, permissions, and audit trails matter as much as raw model capability.

What the NVIDIA deployment reveals

The infrastructure details are central to the story. Codex is running on NVIDIA GB200 NVL72 rack-scale systems, and NVIDIA says that setup is capable of delivering 35x lower cost per million tokens and 50x higher token output per second per megawatt compared with prior-generation systems. Those figures, if sustained in deployment, point to a larger shift: frontier-model inference is becoming more viable at enterprise scale because economics are catching up with ambition.
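To make those ratios concrete, the arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 35x and 50x factors are NVIDIA's claims as quoted above, while the baseline cost, baseline throughput, and monthly token volume are hypothetical placeholders that do not come from NVIDIA or OpenAI.

```python
# Vendor-stated ratios quoted in the article (claims, not measurements made here).
COST_REDUCTION_FACTOR = 35    # "35x lower cost per million tokens"
THROUGHPUT_GAIN_FACTOR = 50   # "50x higher token output per second per megawatt"


def scaled_cost_per_million(baseline_cost: float) -> float:
    """Cost per million tokens after applying the claimed 35x reduction."""
    return baseline_cost / COST_REDUCTION_FACTOR


def scaled_tokens_per_sec_per_mw(baseline_rate: float) -> float:
    """Tokens per second per megawatt after applying the claimed 50x gain."""
    return baseline_rate * THROUGHPUT_GAIN_FACTOR


def monthly_cost(tokens_per_month: float, cost_per_million: float) -> float:
    """Total spend for a given token volume at a given per-million price."""
    return tokens_per_month / 1_000_000 * cost_per_million


if __name__ == "__main__":
    # All three inputs below are hypothetical, chosen only for illustration.
    baseline_cost = 7.00             # dollars per million tokens, prior generation
    baseline_rate = 1_000.0          # tokens per second per megawatt, prior generation
    tokens_per_month = 2_000_000_000

    new_cost = scaled_cost_per_million(baseline_cost)
    new_rate = scaled_tokens_per_sec_per_mw(baseline_rate)

    print(f"cost per million tokens: {baseline_cost:.2f} -> {new_cost:.2f}")
    print(f"tokens/s per MW:         {baseline_rate:,.0f} -> {new_rate:,.0f}")
    print(f"monthly spend before:    {monthly_cost(tokens_per_month, baseline_cost):,.2f}")
    print(f"monthly spend after:     {monthly_cost(tokens_per_month, new_cost):,.2f}")
```

The point of the sketch is that a fixed ratio scales any baseline linearly, so the absolute savings depend entirely on a baseline the article does not state.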

The security model is equally telling. The Codex app supports remote Secure Shell (SSH) connections to approved cloud virtual machines, allowing agents to work with real company data without exposing it externally. NVIDIA IT has rolled out cloud virtual machines for every employee to run the agent safely, creating a dedicated sandbox while maintaining full auditability. Access to production systems is read-only, and the deployment operates under a zero-data-retention policy. In practical terms, GPT-5.5 is being positioned as useful only if it can fit inside enterprise rules rather than bypass them.

That matters because the next phase of AI adoption is likely to be constrained less by model novelty than by corporate governance. A model that cannot satisfy security teams, audit requirements, and data controls will not spread as quickly as one that can. The NVIDIA rollout suggests that the market is moving toward systems designed for controlled autonomy, not open-ended experimentation.

OpenAI’s GPT-5.5 and the widening model-stack race

The partnership behind this launch also shows how concentrated the AI stack has become. NVIDIA says the rollout reflects more than 10 years of collaboration with OpenAI, beginning in 2016 when Jensen Huang hand-delivered the first NVIDIA DGX-1 AI supercomputer to OpenAI’s San Francisco headquarters. The companies have since worked across the full AI stack, including NVIDIA’s role as a day-zero partner for OpenAI’s gpt-oss open-weight model launch.

OpenAI has also committed to deploying more than 10 gigawatts of NVIDIA systems for its next-generation AI infrastructure. That is a striking signal of long-horizon dependence: it ties future model training and inference to large-scale physical infrastructure and suggests that frontier AI remains inseparable from hardware supply, energy use, and system efficiency. In that context, GPT-5.5 is not only a software release; it is evidence of how model development, compute architecture, and enterprise deployment are becoming one continuous pipeline.

Expert perspective and the broader impact

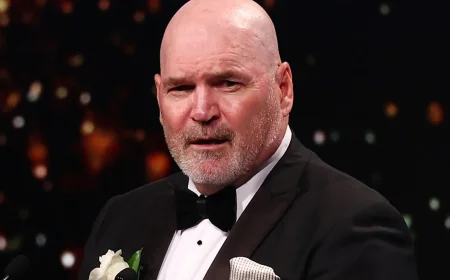

Jensen Huang, NVIDIA’s founder and chief executive, captured the company’s internal posture in an email urging employees to use Codex: “Let’s jump to lightspeed. Welcome to the age of AI.” The statement is promotional, but it also reflects a real strategic bet: that AI agents will move from developer tools into wider knowledge work. NVIDIA says that is the next frontier, where agents help process information, solve complex problems, generate ideas, and drive innovation.

That broader shift could affect regional and global AI competition in two ways. First, enterprises will likely benchmark competing models less on abstract capability than on deployment efficiency, security, and cost. Second, the infrastructure demands behind systems like GPT-5.5 will intensify competition around chips, data center capacity, and power efficiency. The headline may be about a model launch, but the deeper story is about who can make frontier AI dependable enough to operate inside the real economy.

If the next advantage in AI comes from turning powerful models into controlled, enterprise-ready systems, how many more organizations will follow the same path?