DPR Korea AI hiring ruse exposes a costly gap in remote-work defenses

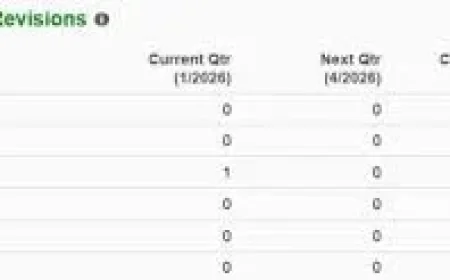

Microsoft says it disrupted 3,000 Microsoft Outlook or Hotmail accounts tied to fake IT job applicants. This is a sign that DPR Korea-linked actors are deploying generative AI across the hiring lifecycle to win remote jobs and siphon wages.

DPR Korea: What is Microsoft documenting, and what is not being said?

Verified facts: Microsoft’s threat intelligence unit documents that actors linked to Pyongyang use generative AI to build convincing fake applicants for remote IT and software roles. The unit names two clusters, Jasper Sleet and Coral Sleet, and details their techniques: voice-changing software during interviews, the AI app Face Swap to insert faces into stolen identity documents, and AI-generated headshots for CVs. Microsoft states that these actors also use AI to produce culturally appropriate name lists and matching email address formats to build fake identities.

Analysis: These tactics are not isolated improvisation; they are applied specifically to get past hiring checkpoints and to stay employed once hired. The documented use of voice modification and identity manipulation erodes the baseline of trust in remote hiring: interviewers and automated checks that once relied on accent, photo ID, or a plausible-looking email address may now be routinely misled.

How the scheme works and who bears the costs

Verified facts: Microsoft describes a pattern in which state-backed fraudsters apply for remote IT work using fabricated identities, aided by local “facilitators” in the target country. Once hired, the fake workers use AI to write emails, translate documents, and generate code, delaying detection while funneling wages back to North Korea. Microsoft also reports that these operators scour job postings on platforms such as Upwork and tailor their applications to the listed skill requirements. Upwork has stated that it takes aggressive action to remove bad actors from its platform.

Analysis: The risks are twofold, financial and data-related. Employers pay real salaries to workers whose accounts Microsoft ties to the scheme, and the access granted to those workers can expose intellectual property or internal systems. The documented funneling of wages back to North Korea points to an organized revenue motive rather than opportunistic fraud, turning routine hiring into a sustainable illicit funding mechanism.

What the intelligence shows about AI’s role, and what must change

Verified facts: Microsoft’s broader threat intelligence reports that threat actors are using generative AI across reconnaissance, phishing, infrastructure development, malware creation, and post-compromise activity. The unit notes that language models and media-generation tools are being used to draft phishing lures, translate content, summarize stolen data, generate or debug malware, and scaffold scripts. Microsoft links these capabilities directly to the remote IT worker schemes run by groups it tracks.

Analysis: The documented pattern recasts AI from an accelerant into an enabler that lowers the skill barrier to sustained fraudulent access. Technical controls that focus on malware signatures or anomalous logins must be complemented by hiring-side verification designed to catch synthetic identities. Microsoft’s disruption of 3,000 accounts demonstrates a capacity for defensive action, but it also reveals the scale of the problem: attackers can regenerate identities and adapt their prompts and tools quickly.

Accountability and next steps (Verified facts + Recommendation): Microsoft’s intelligence identifies the methods and the named clusters, and Upwork has signaled platform enforcement. Employers should expand their verification practices, for example by favoring live video interaction and watching for the visual artifacts and lighting inconsistencies that Microsoft identifies as deepfake tells, and should treat remote hiring itself as a security control. Policymakers and industry defenders should demand transparency about how AI-enabled identity tools are being abused and support targeted controls that make wage funneling and identity laundering harder to accomplish.

Final note (Verified observation): The evidence assembled by Microsoft shows that DPR Korea-linked operators are weaponizing generative AI to turn remote hiring pipelines into revenue streams and persistent access points. The public should know the documented methods, the named clusters involved, and the concrete defensive steps that can reduce this specific attack vector.