Meta and the Smart Glasses Backlash: 3 Pressure Points Exposing a Privacy Gap

The newest fault line in wearable tech is not battery life or style; it is what happens after a recording ends. Meta sits at the center of a widening debate after the UK’s Information Commissioner’s Office (ICO) said it will write to the company over claims that outsourced workers were able to view sensitive content filmed by its AI smart glasses. At the same time, a newly released Android app aims to alert people when someone nearby may be wearing smart glasses, reflecting growing public resistance to always-recording devices.

Meta faces UK regulator questions over smart-glasses data handling

The UK data watchdog, the Information Commissioner’s Office, said it will contact Meta to request information on how it is meeting obligations under UK data protection law. The ICO’s position is explicit: devices processing personal data, including smart glasses, should put users in control and provide appropriate transparency, and service providers must clearly explain what data is collected and how it is used.

This intervention follows a “concerning” report claiming subcontracted workers were able to view sensitive content filmed by the company’s AI smart glasses. Meta has acknowledged that subcontracted workers might sometimes review content (videos and images) captured by its AI smart glasses for the purpose of improving the “experience.” The company also said that when people share content with Meta AI, it sometimes uses contractors to review the data to improve people’s experience with the glasses, and that this is stated in its privacy policy. Meta further said the data is first filtered to protect privacy, and that filtering could include blurring faces in images.

However, people cited in the underlying investigation said that filtering sometimes failed and faces could be seen. The tension here is not merely technical; it turns on user understanding. Meta’s terms indicate that “In some cases Meta will review your interactions with AIs… and this review may be automated or manual (human).” Yet the practical question of whether users grasp what “manual (human)” review means for intimate, real-world footage now sits squarely in the regulator’s frame.

Human review allegations amplify the consent problem in smart-wearable recording

The most destabilizing element of the allegations is their specificity. According to the investigation referenced in the report that prompted the regulator’s response, videos are sometimes reviewed by a Kenya-based subcontractor, including footage of glasses wearers using the toilet or having sex. A worker quoted in that investigation described the breadth of what could be viewed, underscoring how quickly “help improve the experience” can collide with deeply personal exposure.

Meta’s position emphasizes user activation—recording must be activated manually or through a voice command. But the dispute is less about whether a user pressed record and more about downstream pathways: what happens to captured content, who may see it, and how clearly those possibilities are communicated inside long terms and policies. Meta provided its supplemental terms of service when asked, but did not identify which sections covered review of content by human contractors. In a climate of heightened privacy sensitivity around AI devices, that gap becomes a story in itself.

There is also a labor and process layer. The workers described in the investigation were data annotators, people who teach AI to interpret images by manually labeling content. They were employed by Sama, a Nairobi-based outsourcing company with a history of work in data annotation. The practical implication is that “review” is not a hypothetical safeguard; it can be a structured workflow involving human exposure to raw or imperfectly filtered material. This is where Meta will likely be pressed hardest: not on the concept of improving AI systems, but on the boundaries, controls, and clarity around that improvement pipeline.

A detection app signals escalating public pushback against “luxury surveillance”

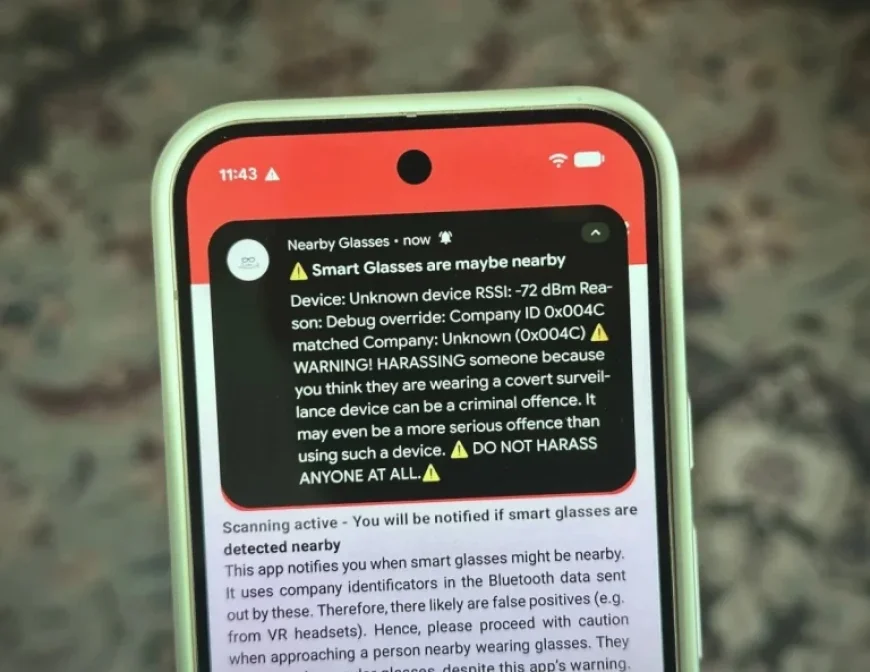

Regulatory scrutiny is arriving alongside consumer countermeasures. An Android app called Nearby Glasses has been introduced with a simple premise: alert the user if someone nearby is wearing smart glasses, or potentially other always-recording tech. The app scans for nearby Bluetooth signals that include a publicly assigned identifier unique to a device manufacturer, and then sends an alert if it detects a signal from a nearby device made by Meta (and Oakley) or Snap. Users can also add their own Bluetooth identifiers to broaden detection.
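To make the matching step concrete, here is a minimal Python sketch of how a scanner could pull a manufacturer identifier out of a raw Bluetooth Low Energy advertisement and compare it with a watch list. It is not the Nearby Glasses source code, and the app's actual implementation may differ. The function names, the sample payload, and the watch list are assumptions made for illustration; the only real identifier used is Apple's (0x004C), and the second entry is a placeholder rather than any glasses maker's assigned value.

```python
from typing import Dict, List

# AD type that marks manufacturer-specific data in a BLE advertisement.
MANUFACTURER_SPECIFIC_DATA = 0xFF

# Hypothetical watch list (an assumption for this sketch).
# 0x004C is Apple's assigned company identifier; 0xFFFF is a placeholder
# standing in for a smart-glasses vendor, not a real vendor's identifier.
WATCH_LIST: Dict[int, str] = {
    0x004C: "Apple",
    0xFFFF: "Example glasses vendor (placeholder)",
}


def company_ids(payload: bytes) -> List[int]:
    """Return the company identifiers found in a raw advertisement payload.

    The payload is a sequence of AD structures: one length byte, one type
    byte, then (length - 1) bytes of data. Manufacturer-specific data starts
    with a 16-bit company identifier in little-endian byte order.
    """
    ids: List[int] = []
    i = 0
    while i < len(payload):
        length = payload[i]
        if length == 0:
            break  # zero length marks the end of meaningful data
        end = i + 1 + length
        if end > len(payload):
            break  # truncated structure; stop rather than guess
        ad_type = payload[i + 1]
        data = payload[i + 2:end]
        if ad_type == MANUFACTURER_SPECIFIC_DATA and len(data) >= 2:
            ids.append(int.from_bytes(data[:2], "little"))
        i = end
    return ids


def matches(payload: bytes, watch_list: Dict[int, str]) -> List[str]:
    """Return the watch-list labels whose identifier appears in the payload."""
    return [watch_list[c] for c in company_ids(payload) if c in watch_list]


# Sample payload (made up): a flags structure followed by
# manufacturer-specific data carrying identifier 0x004C.
sample = bytes([0x02, 0x01, 0x06, 0x05, 0xFF, 0x4C, 0x00, 0x01, 0x02])
print(matches(sample, WATCH_LIST))
```

Because the match is made on the manufacturer alone, a check like this cannot tell one product from another made by the same company, so any device from a watched manufacturer would trigger an alert.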

Its creator, Yves Jeanrenaud, framed smart glasses as an “intolerable intrusion” and described the app as a technical response to a social problem. He also acknowledged constraints, including the potential for false positives—for example, detecting a larger, more obvious virtual reality headset made by the same manufacturer and mistaking it for smart glasses. In a test described alongside the app’s functionality, adding a Bluetooth identifier associated with Apple resulted in a flood of alerts, illustrating that detection works as designed, but also highlighting how noisy proximity-based identification can become in real life.

The emergence of such tools points to a widening “consent perimeter” problem: people near the wearer may worry about being recorded without realizing it, while the wearer may not expect that their content could enter human review processes. Together, these pressures are pushing the debate beyond product features into a governance question: how should wearable AI systems communicate, limit, and verify the handling of bystander and user data once it leaves the device?

For Meta, the near-term issue is regulatory: the ICO’s request for information will put a spotlight on transparency expectations for consumer devices that process personal data. The longer-term issue is social legitimacy: when users and bystanders begin adopting detection tools, a technology’s acceptance can erode even without a formal ban. The next moves (how clearly Meta explains human review, how reliably filtering works, and how user control is operationalized) may determine whether smart glasses settle into everyday life or remain an enduring flashpoint.