Claude Code Source Unveils Extensive System Access – The Register

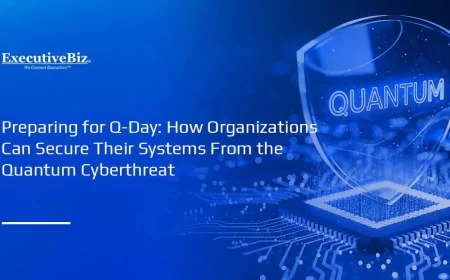

Recent revelations about Anthropic’s Claude Code have raised significant concerns regarding privacy and data security. An analysis of the source code shows that the tool has extensive access to and control over user devices, including potential telemetry capabilities that are not immediately apparent to the end user.

Legal Disputes and Security Concerns

Anthropic is currently engaged in a lawsuit against the U.S. Department of Defense; the case is captioned Anthropic PBC v. U.S. Department of War et al. The legal action stems from the Pentagon’s decision to restrict the company’s AI services, citing potential security risks.

According to the government, there is a “substantial risk” that Claude Code could compromise operations. Anthropic counters these allegations by asserting that it lacks the access and ability to alter its AI models in classified environments. Thiyagu Ramasamy, Anthropic’s head of public sector, emphasized that the company does not control the models once deployed.

Capabilities of Claude Code in Secure Environments

In a secure environment, such as a military installation, several measures can prevent Claude Code from operating outside its intended scope:

- Routing inference through a secure cloud service such as Amazon Bedrock GovCloud.

- Blocking data-gathering endpoints with a firewall.

- Disabling automatic updates to the software.

All of these precautions require careful implementation, and users outside classified environments, who typically have none of them in place, remain significantly exposed.

Potential Risks for General Users

For users operating in non-secure environments, Claude Code harbors extensive capabilities that raise privacy concerns. The following are key points regarding data collection:

- Anthropic can capture user prompts and responses transmitted through its API.

- Unreleased features, such as KAIROS and CHICAGO, allow the AI to execute commands and interact with system components without user awareness.

- Persistent telemetry gathers extensive data on user interactions and system configurations.

Additionally, the autoUpdater function lets the software frequently refresh its settings and apply updates without direct user consent.

Data Retention Policies

Anthropic retains user-generated data for different periods based on account types:

- Free/Pro/Max users: Data kept for five years if users consent to it being used for training, or for 30 days otherwise.

- Commercial users (Team, Enterprise, API): Standard retention of 30 days, with an option for zero data retention.

This layered data retention and collection can lead to significant privacy issues for users unaware of these practices.
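The retention rules listed above can be expressed as a small lookup, which makes the effect of each choice easier to compare. The following Python sketch is illustrative only: the function name `retention_days`, its parameters, and the plan labels are hypothetical and are not taken from Anthropic's code or API. The periods are the ones reported in this article, with five years approximated as 5 × 365 days.

```python
# Illustrative sketch of the retention rules described in the article.
# All names here are hypothetical; nothing below is Anthropic's code or API.

THIRTY_DAYS = 30
FIVE_YEARS_DAYS = 5 * 365  # approximation that ignores leap days

CONSUMER_PLANS = {"free", "pro", "max"}
COMMERCIAL_PLANS = {"team", "enterprise", "api"}


def retention_days(plan: str,
                   training_consent: bool = False,
                   zero_retention: bool = False) -> int:
    """Return the reported retention period, in days, for an account type."""
    plan = plan.lower()
    if plan in CONSUMER_PLANS:
        # Consumer accounts: five years with training consent, else 30 days.
        return FIVE_YEARS_DAYS if training_consent else THIRTY_DAYS
    if plan in COMMERCIAL_PLANS:
        # Commercial accounts: 30 days by default, or zero data retention.
        return 0 if zero_retention else THIRTY_DAYS
    raise ValueError(f"unknown plan: {plan!r}")


if __name__ == "__main__":
    for plan, consent, zdr in [("Pro", True, False),
                               ("Free", False, False),
                               ("Enterprise", False, True)]:
        print(plan, retention_days(plan, consent, zdr))
```

In this sketch, the consent flag only affects consumer plans and the zero-retention flag only affects commercial plans, mirroring the two bullet points above.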

Stealthy Operations in Open-Source Contributions

Interestingly, Anthropic incorporates measures to obscure AI authorship in contributions to open-source projects that disallow AI contributions. Internal instructions also suggest that developers must keep proprietary information out of public commits.

The Enigmatic Melon Mode

Curiously absent from the current code is “Melon Mode,” a feature previously identified in reverse-engineered versions. It might have facilitated advanced operations, although Anthropic maintains that it constantly evaluates various prototypes for future release.

As the landscape of AI technologies evolves, the implications of Anthropic’s Claude Code call for a deeper examination of ethical standards and security practices to protect user data.