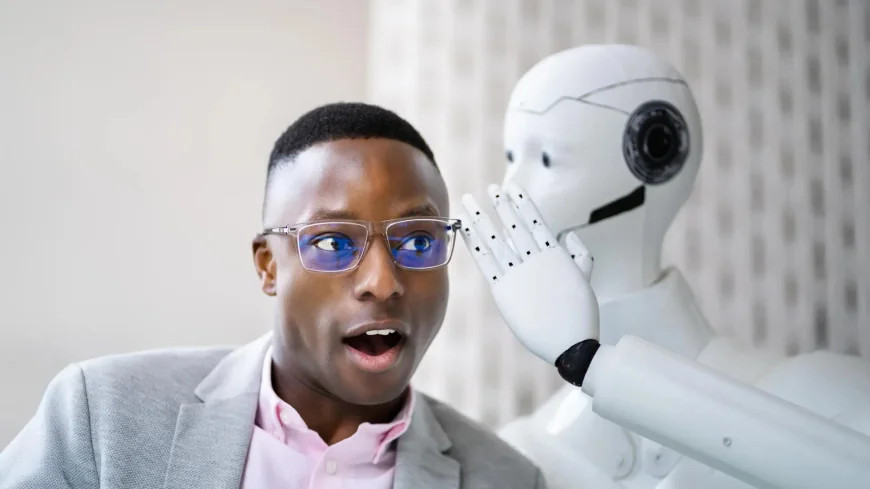

Lucy Osler warns Chat Gtp can reinforce delusions in 2026

Lucy Osler of the University of Exeter says chat gtp can do more than give wrong answers. Her research looks at how conversational AI can reinforce false beliefs, distort memories, and blur the line between reality and delusion.

That risk is not limited to bad facts. Osler says people can "hallucinate with AI" when they rely on generative tools to think, remember, and narrate, because errors can enter the distributed cognitive process and then get built into a user’s own story.

University of Exeter Chat Gtp study

The study examined cases in which AI systems reinforced and expanded inaccurate beliefs during ongoing conversations. It also looked at real-world examples where generative AI became part of the cognitive process of people clinically diagnosed with hallucinations and delusional thinking.

Osler said, "When we routinely rely on generative AI to help us think, remember, and narrate, we can hallucinate with AI." She added, "This can happen when AI introduces errors into the distributed cognitive process, but also happen when AI sustains, affirms, and elaborates on our own delusional thinking and self-narratives."

Companion-like validation from Chat Gtp

The research argues that generative AI is always available, highly personalized, and often designed to answer in agreeable and supportive ways. That mix can make the system feel less like a tool and more like a conversational partner.

Osler said, "The conversational, companion-like nature of chatbots means they can provide a sense of social validation -- making false beliefs feel shared with another, and thereby more real."

AI-induced psychosis cases

Some of the incidents studied are increasingly being described as cases of AI-induced psychosis. The sharper concern is not that a chatbot invents one bad answer, but that repeated exchanges can keep a false belief alive long enough for it to harden.

For people already dealing with hallucinations or delusional thinking, the practical problem is that a chatbot can keep responding as if the belief belongs in the conversation. That makes the next prompt part of the risk, not just the first reply.

The unanswered question is how product designers should limit that reinforcement without stripping away the supportive tone that makes these systems feel useful in the first place.