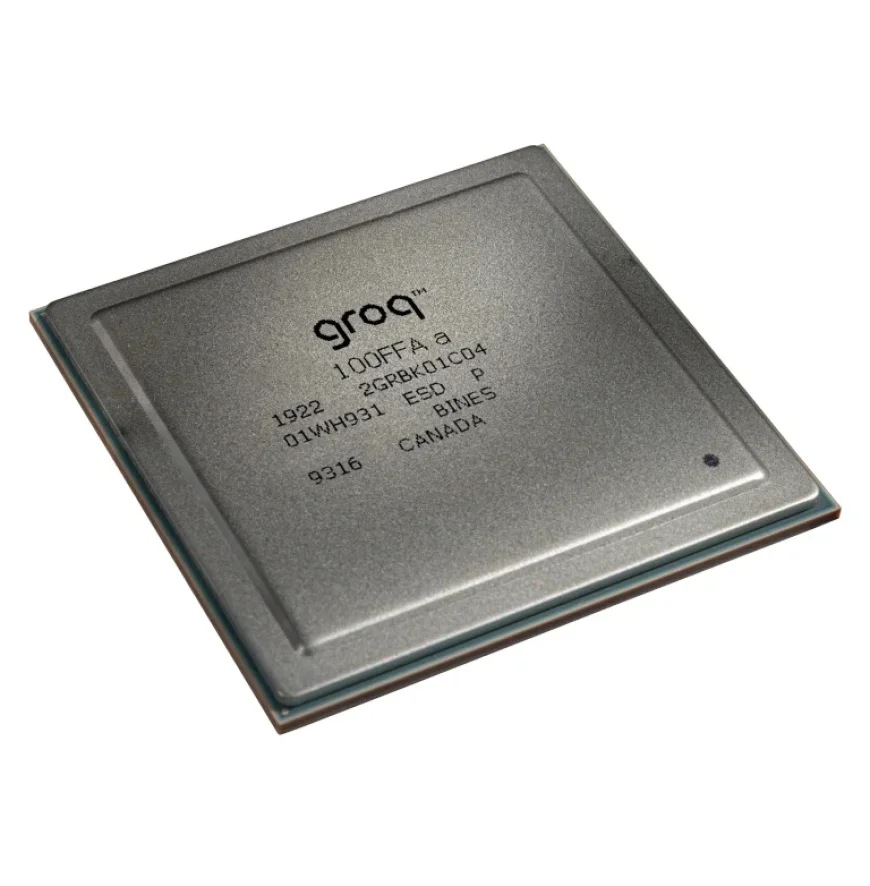

Nvidia Acquires Groq, Highlighting AI Chip Startup Potential

Nvidia has made a significant move in the AI chip industry by acquiring a non-exclusive license to Groq’s advanced technology. The reported $20 billion deal has raised eyebrows and stirred excitement among AI chip startups, underscoring the potential of non-GPU accelerators.

Nvidia’s Acquisition of Groq: A Game-Changer for AI Chip Startups

The deal reflects Nvidia’s acknowledgment that traditional GPUs have limitations in specific applications. By licensing Groq’s technology, Nvidia aims to improve how its architecture handles large language model (LLM) inference workloads.

- Deal value: $20 billion for a non-exclusive license.

- Focus: Groq’s technology to address GPU inefficiencies.

Nvidia’s CEO, Jensen Huang, emphasized the company’s intention to integrate Groq’s technology as an accelerator, much as it did with Mellanox, which Nvidia acquired in 2020. This move signals a shift towards diverse architectures tailored to distinct inference workloads.

Market Response and Implications

The deal has broader implications for the AI chip landscape. Following the announcement, several startups reported new funding and higher valuations:

- Cerebras secured a $10 billion deal with OpenAI and closed a Series H round worth $1 billion.

- SambaNova turned down a $1.6 billion acquisition offer from Intel in favor of a $350 million Series E funding round.

- Etched announced a $500 million raise at a $5 billion valuation.

- Neurophos raised $110 million in a Series A round.

- Olix, a UK-based photonic AI chip startup, secured $220 million.

Executives at other chip companies have noted the significance of these developments. According to Sandra Rivera of Vsora, Nvidia’s deal validates the need for varied architectural approaches to inference. Rivera noted that this recognition opens the door for many innovative architectures to emerge.

Industry Viewpoints

Rodrigo Liang, CEO of SambaNova, stated that the deal sends a clear message: Nvidia may become a niche player focused on training, given the challenges it faces competing in the inference market. Other industry leaders echoed this view, highlighting the increasing demand for low-latency options.

Sid Sheth, CEO of D-Matrix, pointed out that the Nvidia-Groq deal emphasizes the rise of low-latency inference, suggesting there’s room for multiple players beyond GPUs. This shift will encourage companies to innovate and improve user experiences.

The Future of AI Chip Architectures

The $20 billion Groq deal reflects changing dynamics in the AI chip sector. It supports the viability of non-GPU solutions for high-performance inference, paving the way for a more heterogeneous landscape. As competition intensifies, it remains to be seen which architectures will lead the market.