TurboQuant AI Slashes LLM Memory Usage Sixfold

Google Research has introduced TurboQuant, a compression algorithm designed to significantly reduce the memory that large language models (LLMs) use while increasing processing speed and maintaining output accuracy.

TurboQuant’s Impact on Memory Usage

TurboQuant focuses on shrinking the key-value (KV) cache that LLMs rely on during text generation. This cache acts like a “digital cheat sheet,” storing the key and value vectors already computed for earlier tokens so the model does not have to recompute them for each new token. LLMs use vectors to represent the semantic meaning of tokenized text, and because these vectors are often high-dimensional and the cache grows with every token in the context, they can consume substantial memory.
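To give a sense of scale, the following is a rough sketch of how the size of an uncompressed key-value cache can be estimated. The function name `kv_cache_bytes` and the model dimensions (32 layers, 32 heads, head size 128, 16-bit values) are illustrative assumptions, not figures from Google's work.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value):
    """Estimate KV cache size for one sequence (hypothetical helper).

    Every layer stores one key vector and one value vector per token
    and per attention head, hence the leading factor of 2.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Assumed, illustrative model shape: 32 layers, 32 heads of size 128,
# a 4096-token context, and 16-bit (2-byte) values.
size = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128,
                      seq_len=4096, bytes_per_value=2)
print(f"{size / 1024**3:.1f} GiB")  # prints "2.0 GiB"
```

Because the estimate is linear in `seq_len`, doubling the context length doubles the cache, which is why long conversations are where the cache becomes a bottleneck.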

Key Features of TurboQuant

- Performance Boost: TurboQuant has demonstrated up to an 8x increase in performance during initial tests.

- Memory Reduction: The algorithm reportedly reduces memory usage sixfold.

- No Quality Loss: Initial results indicate preservation of output quality despite the significant efficiency gains.
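As a back-of-the-envelope illustration of what a sixfold reduction means, the sketch below divides a storage budget by the reported factor. The 16-bit baseline and the 2 GiB cache size are assumptions chosen for the example, and the helper names are hypothetical.

```python
def bits_per_value_after(baseline_bits, reduction_factor):
    """Average bits left per stored value after compression."""
    return baseline_bits / reduction_factor


def compressed_bytes(original_bytes, reduction_factor):
    """Storage needed once the reduction factor is applied."""
    return original_bytes / reduction_factor


GIB = 1024**3

# Assumed baseline: 16-bit values and a 2 GiB cache.
avg_bits = bits_per_value_after(16, 6)      # roughly 2.67 bits per value
smaller = compressed_bytes(2 * GIB, 6)      # roughly a third of a GiB

print(f"{avg_bits:.2f} bits per value")
print(f"{smaller / GIB:.2f} GiB")
```

Under these assumptions, each stored number would average fewer than three bits, which shows how aggressive the reported compression is.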

Understanding the Technical Process

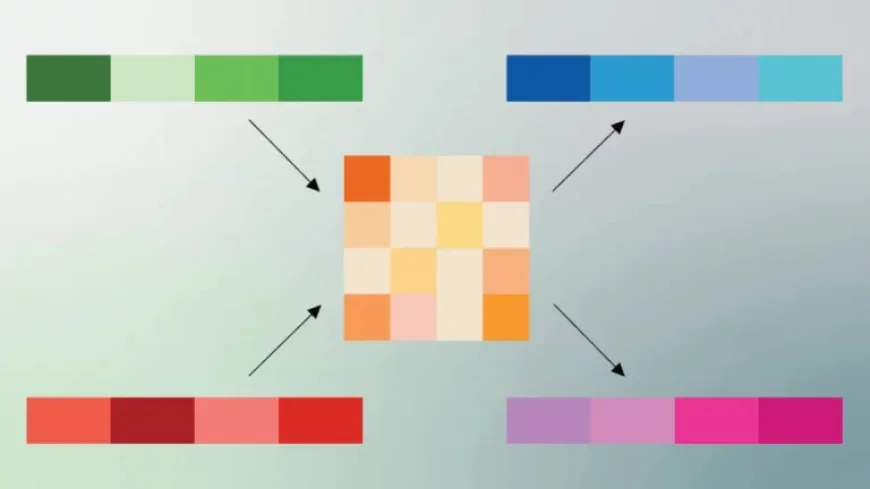

The implementation of TurboQuant involves a two-step approach. Central to this process is PolarQuant, a method developed by Google that transforms vectors from standard Cartesian (XYZ) coordinates to polar coordinates.
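The coordinate change itself is standard geometry. The sketch below shows the textbook two-dimensional conversion and its inverse, using only Python's `math` module. It illustrates the idea of swapping coordinate systems and is not PolarQuant's actual implementation, which works on high-dimensional vectors; the function names are hypothetical.

```python
import math


def to_polar(x, y):
    """Convert a 2D Cartesian point to (radius, angle in radians)."""
    radius = math.hypot(x, y)   # distance from the origin
    angle = math.atan2(y, x)    # direction, in the range [-pi, pi]
    return radius, angle


def to_cartesian(radius, angle):
    """Convert (radius, angle) back to Cartesian x and y."""
    return radius * math.cos(angle), radius * math.sin(angle)


r, theta = to_polar(3.0, 4.0)
x, y = to_cartesian(r, theta)
print(r)  # 5.0
```

Without any rounding, the conversion is lossless up to floating-point error: converting back yields the original point.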

Using PolarQuant for Efficiency

With PolarQuant, each vector is reduced to two key components: a radius that reflects the core strength (magnitude) of the data and a direction (angle) that represents the data’s meaning. This more compact representation is what improves the memory efficiency of LLMs.
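To see why a radius-plus-direction form can be compact, consider a toy two-dimensional example in which the angle is rounded to one of a small number of evenly spaced codes. This uniform angle quantizer is an assumption made for illustration, not the scheme PolarQuant uses, and all function names are hypothetical.

```python
import math


def quantize_angle(angle, bits):
    """Map an angle in radians to one of 2**bits evenly spaced codes."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    # Shift into [0, 2*pi), round to the nearest code, wrap at a full turn.
    return round((angle % (2 * math.pi)) / step) % levels


def dequantize_angle(code, bits):
    """Recover the representative angle for a code."""
    return code * (2 * math.pi / (1 << bits))


def compress(x, y, bits):
    """Keep the radius as-is and store the direction as a small integer."""
    return math.hypot(x, y), quantize_angle(math.atan2(y, x), bits)


def decompress(radius, code, bits):
    """Rebuild an approximate (x, y) from the radius and angle code."""
    angle = dequantize_angle(code, bits)
    return radius * math.cos(angle), radius * math.sin(angle)


radius, code = compress(3.0, 4.0, bits=4)
approx_x, approx_y = decompress(radius, code, bits=4)
print(code, round(approx_x, 3), round(approx_y, 3))
```

In this toy version, the reconstructed point keeps the original length exactly and only its direction is approximated. Raising `bits` shrinks the angular error at the cost of more storage.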

As companies increasingly leverage AI technologies, tools like TurboQuant are instrumental in optimizing performance and resource management. The growing need for efficient data processing will likely drive further innovations in this field.