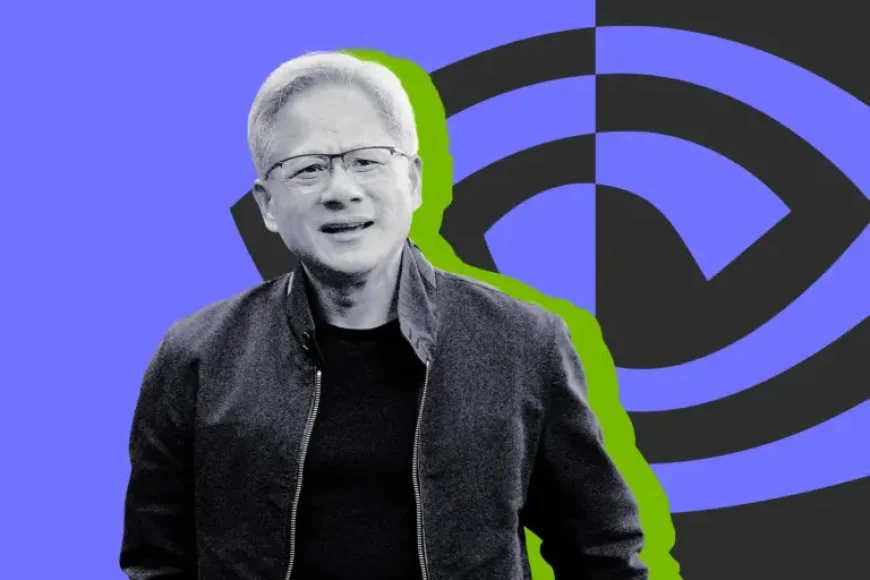

AGI, and the moment Jensen Huang said the future is “now”

On a Monday episode of Lex Fridman’s podcast, Nvidia CEO Jensen Huang leaned into one of technology’s most loaded questions: whether AGI has arrived. “I think it’s now. I think we’ve achieved AGI,” he said. Moments later, he narrowed the promise with a caveat that changed the temperature in the room.

What did Jensen Huang say about AGI, and why did he walk it back?

Huang’s statement landed as a headline-grabbing answer to Fridman’s framing of artificial general intelligence: an AI system able to “essentially do your job,” including starting, growing, and running a successful technology company worth more than $1 billion. Fridman asked for a timeline of five, 10, 15, or 20 years, and Huang answered that it is happening now.

But Huang did not leave the idea standing at full height. In the same conversation, he pointed to OpenClaw, an open-source AI agent platform, describing its viral success and the variety of ways people are using individual AI agents. He sketched out the kinds of unexpected outcomes he could imagine: a digital influencer, a social application, or even something as whimsical as an app that “feeds your little Tamagotchi,” hitting popularity seemingly out of nowhere.

Then came the counterweight. Huang described how many such tools burn bright and fade: “A lot of people use it for a couple of months and it kind of dies away.” And he drew a bright line around what those agents are unlikely to do. “The odds of 100,000 of those agents building Nvidia is zero percent,” he said.

Why does defining AGI matter more than the claim itself?

The friction in Huang’s comments comes down to definition: what counts as “general,” what counts as “intelligence,” and what counts as a real-world threshold rather than a demo. In the conversation with Fridman, the definition is not a formal test; it is a capability benchmark tied to work and value creation.

Fridman’s own definition is intentionally ambitious: an AI that can build and run a billion-dollar technology company. Huang’s answer, as discussed by General Assignments Editor Chance Townsend of Mashable, leaned on a narrower interpretation of what Fridman asked. In that reading, the AI does not need to create something lasting or sustain an institution over time. As Huang put it to Fridman: “You said a billion, and you didn’t say forever.”

This is why the debate around AGI often feels like two conversations happening at once. One side treats the term as a shorthand for human-level, economy-shaping capability. The other treats it as a moving threshold—something you can reach by choosing the right yardstick. Even in Huang’s telling, the difference between a one-time viral win and durable, organizational competence is not a footnote; it is the central distinction.

What kind of “now” was Huang describing—viral apps or lasting institutions?

Huang’s picture of “now” does not require a machine to replicate the slow, compound craft of building a company that survives. It is closer to a story of scale without permanence: an AI that makes a simple web service, goes viral, gets used by billions, and then folds. He pointed to the dot-com era as precedent, arguing that many websites from that period were not more sophisticated than what an AI agent could generate today.

In that framing, the human drama is not just technological—it is economic and psychological. Viral success is seductive because it looks like destiny, but it often behaves like a weather system: intense, sudden, and gone before anyone can name what exactly made it happen. Huang’s own caveat—zero percent odds of agents building Nvidia—signals that, in his view, the “institutional intelligence” required to create and sustain a company like his remains out of reach.

That gap matters because the word “AGI” carries consequences beyond marketing. The term has been tied to key clauses in big-ticket contracts between companies such as OpenAI and Microsoft, where significant amounts of money may hinge on whether a threshold is reached. At the same time, tech leaders have attempted to distance themselves from the phrase and introduce alternatives they consider less over-hyped and more clearly defined, though in practice those alternatives often point to similar aspirations.

How does Nvidia’s real-world influence shape the AGI conversation?

Huang is not only a speaker in a debate; he leads a company with tangible reach across industries. MotorTrend, which named Huang its 2026 Person of the Year, described Nvidia as an “artificial intelligence chip giant” and highlighted how its hardware and software have become central to the auto industry’s shift toward smart, software-defined vehicles.

MotorTrend traced Nvidia’s evolution from its early graphics ambitions (the company was founded in 1993 by Huang and two other engineers) to the development of GPUs that pushed gaming forward, and then to an AI powerhouse with a software stack and developer ecosystem “with deep learning at its core.” In the automotive sphere, it described Nvidia supplying hardware and software used to create smarter vehicles, enabling faster and cheaper design and testing through virtual evaluation before cars hit real streets, and supporting factory planning, robotics, and vision systems.

The same account described Nvidia’s role in infotainment and driver monitoring, and said the company now has the intelligence needed to scale self-driving, software-defined vehicles with over-the-air update capability. MotorTrend also gave a concrete example: Mercedes-Benz working with Nvidia on the 2026 CLA and MB.OS, and building Drive Assist Pro using a combination of real-world and virtual data to generate scenarios and simulations, turning a single incident into hundreds of thousands of miles of test driving.

That is the human reality behind the abstract term: when executives debate AGI on a podcast, people in multiple industries are already living with the downstream effects of AI platforms as tools—inside vehicles, design labs, factories, and software stacks.

What responses are emerging as leaders argue over AGI?

One response is linguistic: a push by tech leaders to avoid the baggage of “AGI” and introduce clearer terms. Another is practical: the rise of agent platforms like OpenClaw that invite experimentation by letting people spin up individual agents to try to do real tasks, even if many projects fade after a brief surge.

And a third response is caution embedded inside ambition. Huang’s own remarks contain both the spark—“it’s now”—and the restraint—agents are not building Nvidia. That tension, more than any single claim, reflects the moment: widespread experimentation, big expectations, and an unresolved question about whether short-lived virality should be treated as proof of general intelligence or simply as a new form of rapid prototyping.

Back on Fridman’s podcast, the room held two truths at once: Huang’s insistence that the threshold has arrived, and his admission of a ceiling that still stands. If AGI is “now,” the world will still have to decide what kind of “now” it means: one that flashes, or one that lasts.

Image caption (alt text): Jensen Huang discusses AGI during a conversation about AI agents and what the term means.