Gpt-Rosalind and the 3 reasons OpenAI is narrowing biology AI

Gpt-Rosalind signals a sharper turn in laboratory AI: instead of a broad science model, OpenAI is offering a system trained on common biology workflows and limiting who can use it. The move matters because the company is not only aiming to help researchers process enormous biological datasets, but also to make the model more cautious in a field where a confident wrong answer can mislead work on pathways, proteins, and drug targets. That combination of specialization and restraint defines the release.

Why Gpt-Rosalind matters right now

The timing reflects a practical problem in modern biology. OpenAI says current researchers face two main bottlenecks: vast datasets built over decades of genome sequencing and protein biochemistry, and a field broken into highly specialized subfields with distinct techniques and jargon. In that setting, Gpt-Rosalind is designed to help bridge knowledge gaps without pretending that every biology task is the same. The model is available in closed access, and only U.S.-based entities can apply for access under the trusted access deployment structure at this stage.

That limited rollout is not a small detail. It shows the company sees immediate usefulness, but also real risk. OpenAI has said the model could produce harmful outputs if misused, including for tasks such as optimizing a virus’s infectivity. In other words, the same capacity that might help prioritize drug targets also demands tighter controls. The biology focus is therefore not just a product choice; it is a governance choice.

What lies beneath the biology-first design

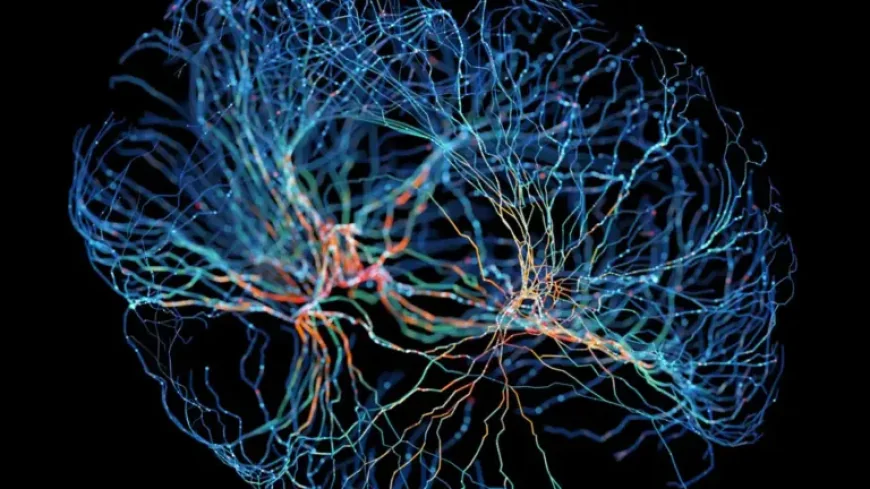

OpenAI’s life sciences product lead, Yunyun Wang, said the system was trained on 50 of the most common biological workflows and on how to access major public databases of biological information. That is the core of the strategy: rather than asking a general-purpose system to improvise across life sciences, the company is narrowing the task set to routines researchers actually use. Wang said the model can suggest likely biological pathways and prioritize potential drug targets, while connecting genotype to phenotype through known pathways and regulatory mechanisms.

That wording matters because it suggests a mechanistic ambition, not just a text-generation tool. OpenAI is positioning Gpt-Rosalind as something that can infer likely structural or functional properties of proteins and help surface connections that a human researcher might miss in a large literature base. Yet the company is also trying to reduce the model’s tendency toward sycophancy and overenthusiasm by making it more skeptical. That is important in biology, where a model that flatters a hypothesis can be worse than one that challenges it.

Still, uncertainty remains. OpenAI has not clearly addressed whether the hallucination problem has been fully handled, and that is a major limitation for any system meant to assist scientific judgment. If a model can confidently produce an erroneous pathway or misleading explanation, its usefulness can fall quickly. For that reason, the value of Gpt-Rosalind will ultimately depend less on its framing and more on whether it consistently improves research decisions in practice.

Expert perspectives on Gpt-Rosalind

Wang framed the model as a tool for reasoning through complex, multi-step processes and said its “expert-level” characterization comes from benchmark performance. That distinction is meaningful: “reasoning” describes how the model works through a problem, while “expert-level” describes measured performance on selected tests. The difference matters because science users need more than fluent language; they need a system that can support careful judgment under uncertainty.

OpenAI’s own description also places the model within a broader pattern in science AI, though it says other companies have tended to build more generic systems. Gpt-Rosalind is different because it is biology-specific. That specialization may give it an advantage in relevance, but it may also make its limitations easier to spot if the model fails outside its narrow lane.

Regional and global impact of a closed-access biology model

The immediate reach is restricted, but the broader implications are global. If biology-tuned systems prove useful, they could reshape how labs handle literature review, pathway analysis, and target prioritization. If they do not, the field may stay stuck with broader tools that can span disciplines but lack depth in any one area. Either way, Gpt-Rosalind highlights a larger shift: life sciences AI is moving from generic assistance toward workflow-specific systems.

The access rules also suggest an early effort to balance innovation with containment. OpenAI plans to make a more limited Life Sciences Research Plugin generally available, but the flagship model stays tightly controlled for now. That split hints at a cautious rollout strategy: broaden exposure to lower-risk functions while limiting higher-stakes capabilities until the model’s behavior is better understood.

For researchers, the real question is whether a biology-specific model can consistently turn complexity into clarity without amplifying errors. And for the industry, the deeper test is whether Gpt-Rosalind becomes a template for safer scientific AI, or simply another reminder that specialization alone does not guarantee trust.