Trump Orders U.S. Administration to Halt Anthropic AI Use

The Pentagon has officially selected OpenAI’s artificial intelligence models over those of Anthropic, which had previously refused to give the U.S. military unrestricted access, citing ethical concerns. The announcement came after Donald Trump ordered his administration to immediately cease all use of Claude, Anthropic’s AI.

Details of the Decision

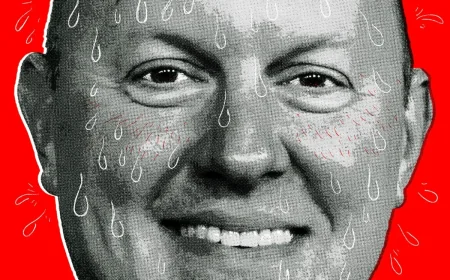

On Friday, OpenAI’s CEO Sam Altman confirmed the agreement with the Department of Defense on the social media platform X. The deal allows OpenAI’s models to be deployed within the Pentagon’s classified network. Altman emphasized that it includes specific guidelines aimed at preventing mass surveillance and ensuring human accountability in the use of force, particularly in autonomous weapon systems.

Trump’s Stance on Anthropic

Earlier, Trump criticized Anthropic, stating, “We don’t need them, we don’t want them, and we will no longer work with them.” He expressed concerns that the company’s actions could endanger American lives and national security. Trump’s comments were made on his platform, Truth Social, where he cited “radical left” companies as inappropriate influences on the military.

Response from Anthropic

In the wake of Trump’s remarks, Defense Secretary Pete Hegseth accused Anthropic of “betrayal” and dismissed the company from any current or future military collaboration. Anthropic expressed disappointment over this decision, labeling it legally unfounded and potentially damaging to other American companies negotiating with the government. The firm has vowed to pursue legal action.

Ethical Considerations in AI

- Anthropic insisted that unrestricted military use of Claude contradicts democratic values.

- CEO Dario Amodei highlighted that today’s advanced AI systems lack the reliability necessary for direct control over lethal weapons.

- He noted that AI should be deployed with appropriate safeguards that are currently not in place.

Founded in 2021 by former OpenAI employees, Anthropic has maintained a commitment to ethical AI development. This commitment includes a set of guiding principles laid out in a document referred to as a “constitution,” introduced in early 2026. This framework aims to prevent any actions that pose inappropriate dangers.

Conclusion and Future Outlook

As OpenAI moves forward under the new agreement with the Pentagon, Altman has urged the Department of Defense to offer the same conditions to all AI companies. He expressed a desire for peaceful resolutions that avoid legal disputes and lead to reasonable agreements on the use of artificial intelligence.